There are various large language models (LLMs) available, including ChatGPT, Bard and the models offered by Cohere.

Anyone who designs or develops a new chatbot will know that their first job is to define which “intents” the chatbot has to support. An intent can be anything from asking a question to submitting expenses or providing feedback.

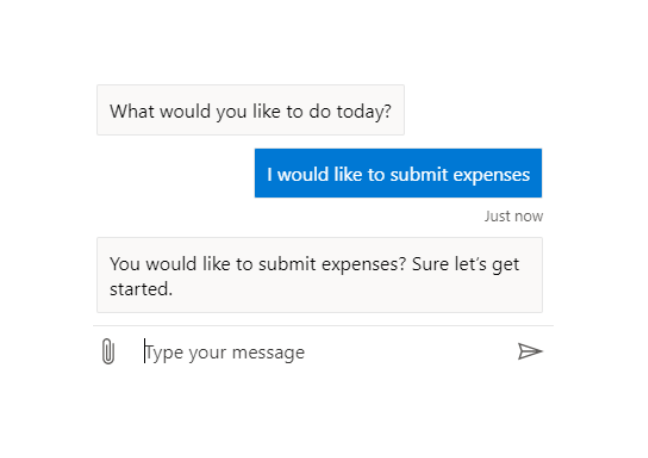

Typically, we train a chatbot to understand a user’s intent simply by supplying lots of training examples. For example, for submitting expenses we would provide examples such as:

- I would like to submit expenses

- submit expenses

- I want to submit expenses
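To make the idea concrete, here is a minimal sketch of example-based intent matching. It is not how any particular chatbot platform works internally: the intent names, the timesheet examples, the word-overlap scoring and the 0.3 threshold are all assumptions chosen for illustration.

```python
import re
from typing import Optional

# Hypothetical training data: each intent maps to example utterances.
TRAINING_EXAMPLES = {
    "submit_expenses": [
        "I would like to submit expenses",
        "submit expenses",
        "I want to submit expenses",
    ],
    "submit_timesheets": [
        "I would like to submit timesheets",
        "submit timesheets",
        "I want to submit my timesheet",
    ],
}


def tokens(text: str) -> set:
    """Lower-case the text and return its words as a set."""
    return set(re.findall(r"[a-z']+", text.lower()))


def classify(message: str, threshold: float = 0.3) -> Optional[str]:
    """Return the intent of the best-matching example, or None if nothing is close enough."""
    words = tokens(message)
    best_intent, best_score = None, 0.0
    for intent, examples in TRAINING_EXAMPLES.items():
        for example in examples:
            example_words = tokens(example)
            union = words | example_words
            # Jaccard similarity: shared words divided by all words seen.
            score = len(words & example_words) / len(union) if union else 0.0
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent if best_score >= threshold else None
```

With these examples, `classify("please submit my expenses")` matches the expenses intent, while a message that shares no words with any example returns `None`.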

Once the user’s intent has been determined, the relevant business process can be initiated.
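One common way to connect an intent to a business process is a simple lookup table of handlers. The sketch below assumes hypothetical intent names and handler functions; a real bot would call into the relevant back-office system instead of returning a string.

```python
from typing import Callable, Dict


def start_expenses_process(user_id: str) -> str:
    # Placeholder for launching the real expenses workflow.
    return f"Expenses form opened for {user_id}"


def start_timesheet_process(user_id: str) -> str:
    # Placeholder for launching the real timesheet workflow.
    return f"Timesheet form opened for {user_id}"


# Hypothetical mapping from intent name to the process that handles it.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "submit_expenses": start_expenses_process,
    "submit_timesheets": start_timesheet_process,
}


def handle_intent(intent: str, user_id: str) -> str:
    """Start the process registered for the intent, or fall back to a default reply."""
    handler = HANDLERS.get(intent)
    if handler is None:
        return "Sorry, I didn't understand that."
    return handler(user_id)
```

Adding a new intent then means adding one handler and one entry in the table.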

This all works fine where the business process (intent) is pretty obvious. It doesn’t take long to train a chatbot to understand the difference between “submit expenses” and “submit timesheets”.

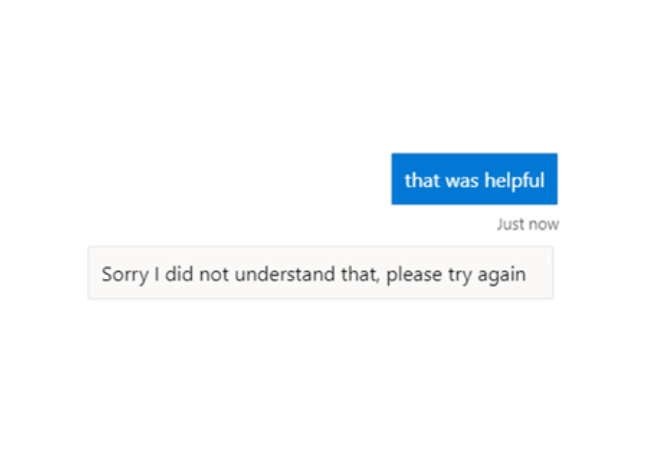

There is a problem, however, with intents that are closer to general chit-chat, where users want to express gratitude, provide feedback, make a suggestion or even say something rude.

In these situations, it is quite a challenge to provide sufficient training examples. How can you list all the ways in which a user can express gratitude or say something insulting?

Sometimes this is so much of a challenge that developers resort to other techniques, such as insisting that users put the word “Feedback” at the beginning of any message where they want to provide feedback. Obviously, this is far from ideal.
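A minimal sketch of that keyword workaround shows why it is brittle. The function name and the punctuation it tolerates after the keyword are assumptions for illustration.

```python
from typing import Optional


def extract_feedback(message: str) -> Optional[str]:
    """Return the feedback text if the message starts with the keyword, else None."""
    prefix = "feedback"
    stripped = message.strip()
    if stripped.lower().startswith(prefix):
        # Drop the keyword and any separator the user typed after it.
        return stripped[len(prefix):].lstrip(" :,-").strip()
    return None
```

`extract_feedback("Feedback: the bot is slow")` returns the text after the keyword, but a perfectly natural message such as "I have some feedback about the bot" returns `None`, so the feedback is missed.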

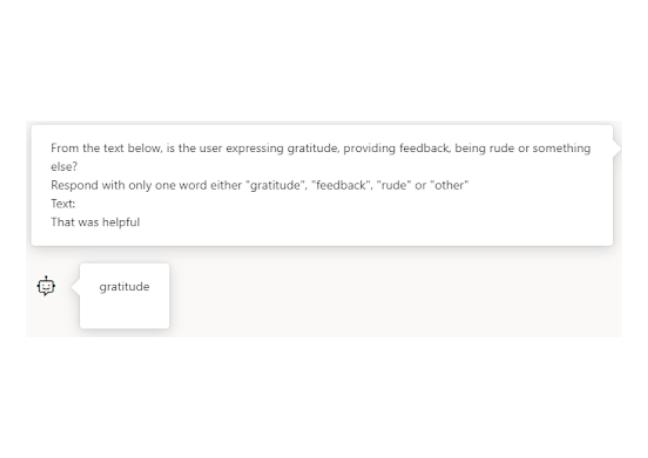

This is where LLMs can save the day!

Using an API call, we simply pass the user’s message over to an LLM and ask the LLM to determine the user’s intent. This will normally involve “prompt engineering” to make sure the LLM only comes back with one of the allowed responses.

As an example, here’s a prompt you could use to find out if the text “that was helpful” was meant as gratitude, feedback, rudeness or something else:

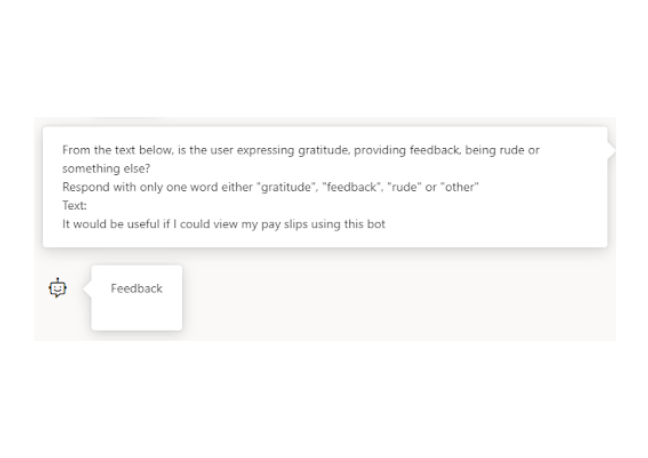

The same approach works if the user provides feedback such as “It would be useful if I could view my pay slips using this bot”. Here the LLM should come back with the feedback intent.
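The pattern can be sketched in a few lines. The prompt wording, the label names and the `complete` parameter are assumptions: `complete` stands in for whichever LLM API call you use (it takes a prompt and returns the model's text), and the prompt here is illustrative rather than the exact one shown in this article.

```python
from typing import Callable

# The only answers we are prepared to accept from the LLM.
ALLOWED_INTENTS = ("gratitude", "feedback", "rudeness", "other")


def build_prompt(message: str) -> str:
    """Build a prompt that restricts the LLM to one of the allowed labels."""
    labels = ", ".join(ALLOWED_INTENTS)
    return (
        "Classify the intent of the user message below.\n"
        f"Reply with exactly one word from this list: {labels}.\n"
        f'User message: "{message}"\n'
        "Intent:"
    )


def detect_intent(message: str, complete: Callable[[str], str]) -> str:
    """Ask the LLM for the intent and fall back to 'other' on any unexpected reply."""
    raw = complete(build_prompt(message))
    # Tolerate stray whitespace, quotes, a trailing full stop and capitalisation.
    label = raw.strip().strip(".\"'").lower()
    return label if label in ALLOWED_INTENTS else "other"


# Usage with a stub in place of a real LLM call (hypothetical behaviour):
def fake_llm(prompt: str) -> str:
    return " Gratitude.\n"


intent = detect_intent("that was helpful", fake_llm)
```

Checking the reply against the allowed list matters because prompt engineering reduces, but does not eliminate, the chance of the LLM answering with something else.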

In summary, a simple API call to an LLM can:

- save many hours of chatbot training and testing

- improve user experience by instinctively understanding a wide range of user intents

This is the first article in a three-part series on large language models (LLMs). Take a look at how LLMs can greatly improve the success of “knowledge” bots and how LLMs can be used to validate a chatbot’s response.
